felipepepe

As I said in my previous post: if you want a somewhat accurate ranking, you need a way to measure how distorted the results are.

You will probably not like this but here is my proposal.

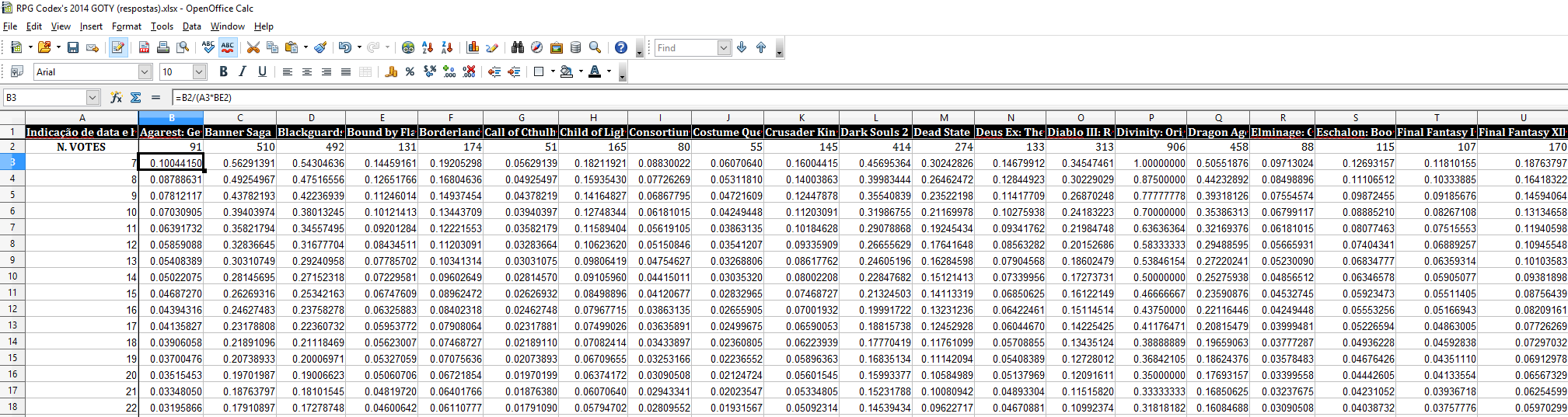

For example: for 2014 we know that DOS has the most votes (906), and we also know that the ranking for DOS is the most skewed at m=7. That gives the distortion reference factor r = 906/7 = 129.4285714285714, which represents 100% distortion.

Now, for each m we compute the distortion percentage for each game relative to the reference described above: (number of votes / m) / r. For any m of 7 or more this produces a distortion percentage between 0 and 1 (or 0-100% of the maximum possible distortion).
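To make the formula concrete, here is a minimal sketch of it in Python. Only the 906 votes for DOS and the reference m=7 come from the numbers above; the function and constant names are made up for illustration.

```python
# Reference point from the 2014 data: DOS has the most votes (906)
# and is the most skewed at m = 7.
M_REF = 7
MAX_VOTES = 906

# Distortion reference factor: represents 100% distortion.
r = MAX_VOTES / M_REF  # 129.4285714285714


def distortion(votes, m):
    """Distortion of a game with `votes` votes at a given m, relative to r."""
    return (votes / m) / r


# DOS at the reference m is the maximum by construction.
print(distortion(906, 7))   # 1.0
# Doubling m halves the distortion for the same game.
print(distortion(906, 14))  # 0.5
```

Since votes and r are fixed, the value for a given game shrinks in proportion to 1/m.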

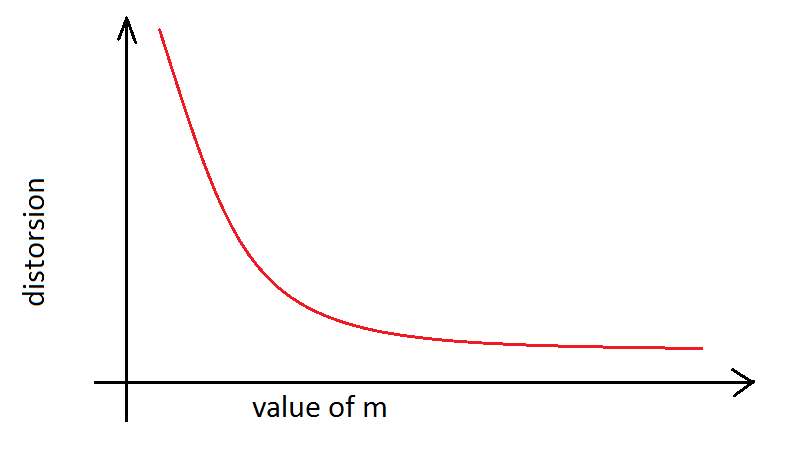

As you can see above: the distortion percentage decreases as m increases.

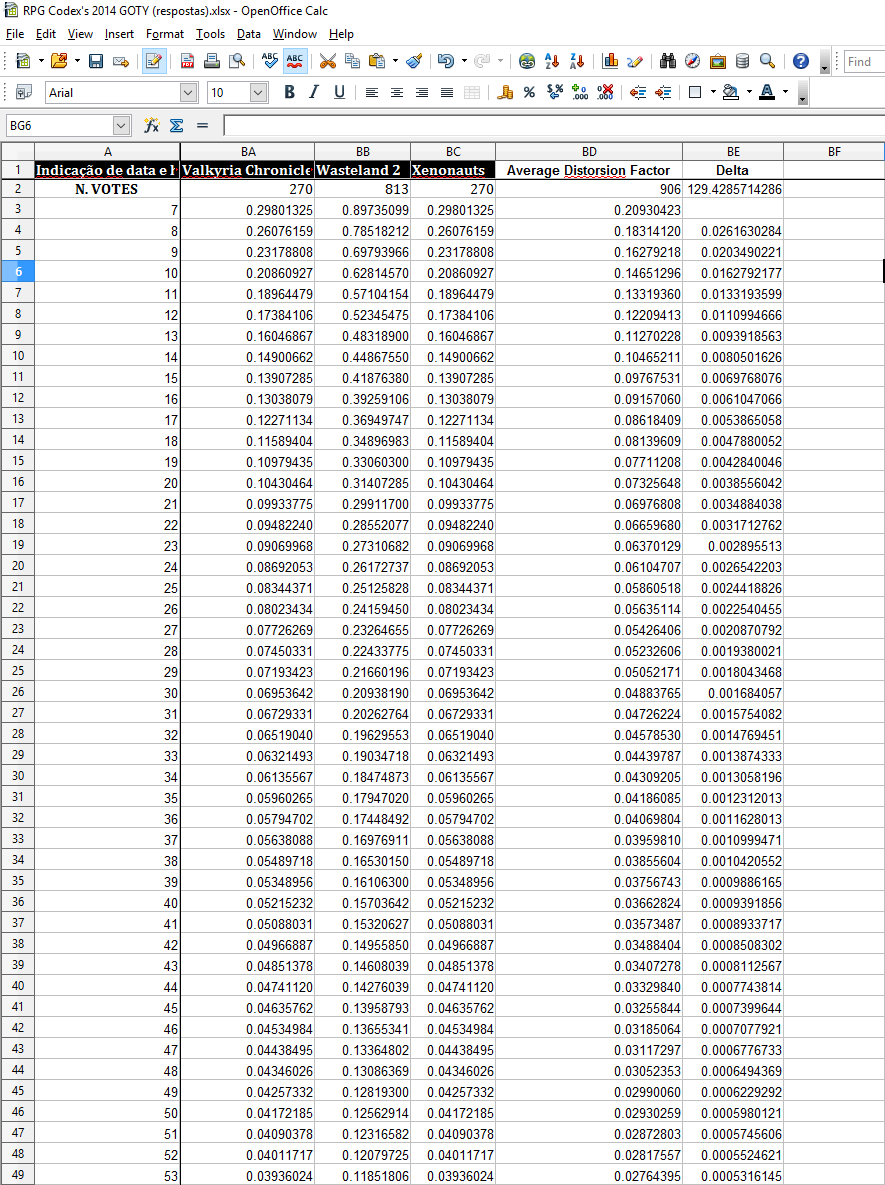

Anyway the next step was to compute the average distortion percentage for the entire sample (look below).

All these computations will not give us the correct m, but they can help us identify an adequate m.

Based on the data above, and considering that we cannot increase m forever (it will skew the rating for low voted games), I can say that any m value between 27 and 39 would give more accurate results than m = 7.

In other words, if you want a simple criterion that is defined up front, then you can say:

m will be equal to the number of votes which brings the average distortion under 5% (m=30), or

m will be equal to the number of votes at which the gain in average distortion drops under 3 decimals (m=39).
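Both criteria can be sketched as a small search over m. The vote counts below are hypothetical placeholders (only the 906 for DOS is real), so this will not reproduce m=30 or m=39; plug in the actual sample for that. Reading "under 3 decimals" as "the average drops by less than 0.001 from m-1 to m" is an assumption on my part, and the function names are made up for illustration.

```python
from statistics import mean

M_REF = 7
MAX_VOTES = 906
r = MAX_VOTES / M_REF  # distortion reference factor (100% distortion)

# HYPOTHETICAL sample: only the 906 is taken from the 2014 data.
sample_votes = [906, 300, 120, 60, 20]


def average_distortion(votes, m):
    """Mean of (votes / m) / r over the whole sample."""
    return mean((v / m) / r for v in votes)


def first_m_below_threshold(votes, threshold=0.05, m_max=1000):
    """Smallest m >= M_REF whose average distortion is under `threshold`."""
    for m in range(M_REF, m_max + 1):
        if average_distortion(votes, m) < threshold:
            return m
    return None  # threshold never reached within m_max


def first_m_with_small_gain(votes, min_gain=0.001, m_max=1000):
    """Smallest m where going from m-1 to m lowers the average by < min_gain."""
    for m in range(M_REF + 1, m_max + 1):
        gain = average_distortion(votes, m - 1) - average_distortion(votes, m)
        if gain < min_gain:
            return m
    return None


print(first_m_below_threshold(sample_votes))
print(first_m_with_small_gain(sample_votes))
```

Either function gives a rule you can fix before the vote, which is the point of the two options above.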

Just to be clear: I don't claim that my method is perfect or the only one. In fact I know that a complete solution needs two distortion factors: one for low voted games and one for high voted games. But I cannot be bothered to design such a method, because I doubt you will accept even this one (which is much simpler).

However, considering that there is no way to obtain perfect results (some distortion will always be present), I think it is acceptable to use one of the criteria defined above. They might not be the perfect solution, but they really can help you select an adequate m.

Anyway, this is my last input on this topic. Maybe I used the wrong tone several times and I apologize.

Wasteland 2 and Legend of Grimrock 2 were clearly disadvantaged last year. Shouldn't we try to avoid the same situation? *Some morons only see my sublime butthurt, but the fact is: it happened not because I did not vote for them but because of the methodology.

I don't think I'm unreasonable about this considering that you do have the means to improve the methodology. Use them.
